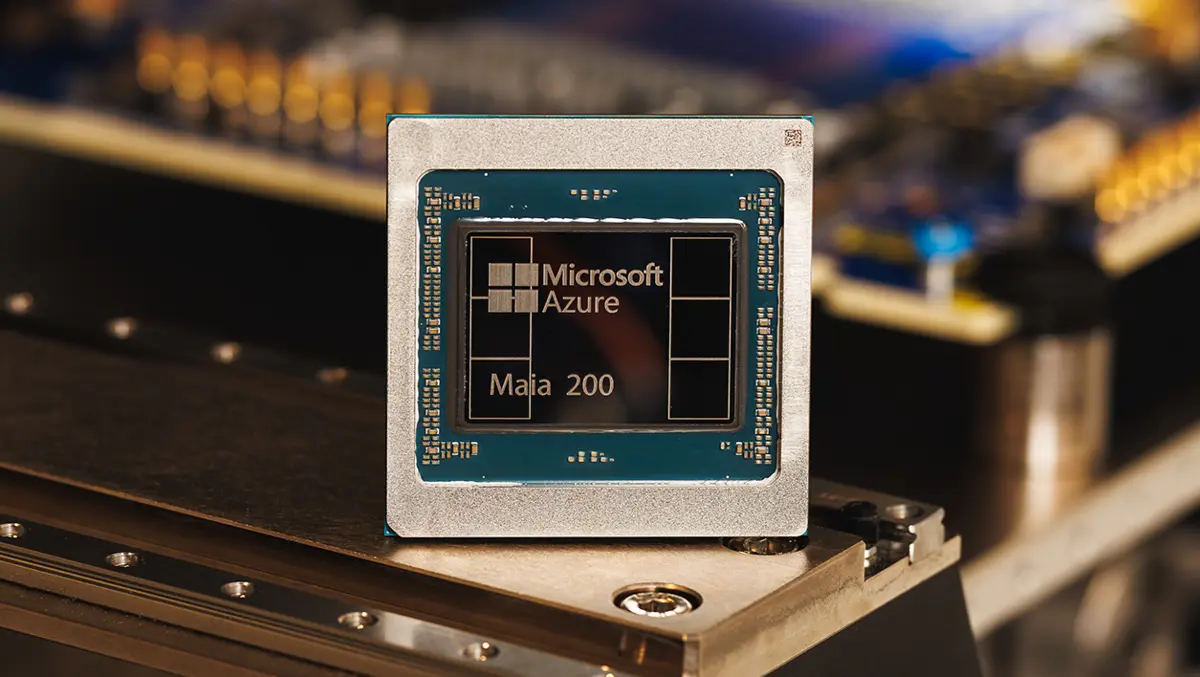

Microsoft has launched Maia 200, a new in-house AI accelerator aimed at large-scale inference workloads in its cloud and product portfolio.

The company said Maia 200 will sit alongside other processors within its broader AI infrastructure. Microsoft said it has already deployed Maia 200 in its US Central datacentre region and named US West 3 as the next deployment location.

Chip details

Microsoft described Maia 200 as an inference accelerator built on TSMC's 3nm process. The company said each chip contains more than 140 billion transistors and runs within a 750W system-on-chip thermal design power envelope.

Microsoft disclosed performance figures in the low-precision formats used for AI inference. It said each Maia 200 chip delivers over 10 petaFLOPS in 4-bit precision (FP4) and over 5 petaFLOPS in 8-bit precision (FP8).
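To put these peak figures in context, the sketch below turns them into a rough compute-bound ceiling on generated tokens per second, plus a peak FLOPs-per-watt figure using the stated 750W envelope. It assumes the common approximation of about two FLOPs per model parameter per generated token and full utilisation, and the 70-billion-parameter model is a hypothetical example. None of these assumptions come from Microsoft, and real systems run well below peak.

```python
# Back-of-envelope compute ceiling for Maia 200, using Microsoft's stated
# peak figures. Assumptions (not from Microsoft): ~2 FLOPs per parameter per
# generated token, 100% utilisation, and a hypothetical 70B-parameter model.

PEAK_FLOPS = {
    "fp4": 10e15,  # "over 10 petaFLOPS" in 4-bit precision
    "fp8": 5e15,   # "over 5 petaFLOPS" in 8-bit precision
}
TDP_WATTS = 750  # stated system-on-chip thermal design power


def compute_bound_tokens_per_s(params: float, precision: str) -> float:
    """Upper bound on tokens/s if the chip were purely compute-limited."""
    flops_per_token = 2 * params  # common dense-transformer approximation
    return PEAK_FLOPS[precision] / flops_per_token


def flops_per_watt(precision: str) -> float:
    """Peak FLOPs per watt at the stated 750 W envelope."""
    return PEAK_FLOPS[precision] / TDP_WATTS


if __name__ == "__main__":
    params = 70e9  # hypothetical model size
    for p in ("fp4", "fp8"):
        print(f"{p}: <= {compute_bound_tokens_per_s(params, p):,.0f} tokens/s, "
              f"{flops_per_watt(p) / 1e12:.1f} TFLOPS/W peak")
```

Because Microsoft's figures are lower bounds ("over"), these ceilings are approximate. In practice, single-stream decoding is usually limited by memory bandwidth long before it approaches the compute ceiling.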

The company also detailed its memory design. It said Maia 200 uses 216GB of HBM3e with 7TB/s of bandwidth, alongside 272MB of on-chip SRAM.
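Memory bandwidth often matters more than peak FLOPs for token generation. The sketch below is a back-of-envelope model, not anything Microsoft published. It assumes batch-size-1 decoding in which every weight byte is read from HBM once per token, decimal gigabytes and terabytes, and a hypothetical 70-billion-parameter model; it ignores KV cache and activations. It checks whether the weights fit in 216GB, bounds decode speed by the 7TB/s figure, and computes the FP8 "ridge point" in FLOPs per byte from the stated 5 petaFLOPS.

```python
# Memory-bandwidth view of Maia 200 using Microsoft's stated figures.
# Assumptions (not from Microsoft): batch-size-1 decoding where every weight
# byte is read once per generated token, decimal units, a hypothetical
# 70B-parameter model, and no KV cache or activation traffic.

HBM_CAPACITY_BYTES = 216e9   # 216 GB of HBM3e
HBM_BANDWIDTH = 7e12         # 7 TB/s
PEAK_FP8_FLOPS = 5e15        # "over 5 petaFLOPS" in FP8

BYTES_PER_PARAM = {"fp8": 1.0, "fp4": 0.5}


def weight_bytes(params: float, precision: str) -> float:
    return params * BYTES_PER_PARAM[precision]


def fits_in_hbm(params: float, precision: str) -> bool:
    """Whether the weights alone fit in HBM (ignores KV cache and activations)."""
    return weight_bytes(params, precision) <= HBM_CAPACITY_BYTES


def bandwidth_bound_tokens_per_s(params: float, precision: str) -> float:
    """Upper bound on batch-1 decode speed when limited by HBM bandwidth."""
    return HBM_BANDWIDTH / weight_bytes(params, precision)


def full_hbm_sweep_seconds() -> float:
    """Time to read the entire 216 GB of HBM once at 7 TB/s."""
    return HBM_CAPACITY_BYTES / HBM_BANDWIDTH


def ridge_point_flops_per_byte() -> float:
    """Arithmetic intensity at which FP8 compute and HBM bandwidth balance."""
    return PEAK_FP8_FLOPS / HBM_BANDWIDTH


if __name__ == "__main__":
    params = 70e9  # hypothetical model size
    print(fits_in_hbm(params, "fp8"))
    print(bandwidth_bound_tokens_per_s(params, "fp8"), "tokens/s at batch 1")
    print(f"{full_hbm_sweep_seconds() * 1e3:.1f} ms to sweep all HBM")
    print(round(ridge_point_flops_per_byte()), "FLOPs/byte ridge point")
```

A ridge point of roughly 714 FLOPs per byte means a kernel must do hundreds of operations per byte fetched from HBM before FP8 compute becomes the bottleneck; batching and on-chip data reuse are the usual ways to raise that ratio.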

Microsoft positioned the launch against other custom accelerators used by hyperscale operators. The company said Maia 200 offers "three times the FP4 performance of the third generation Amazon Trainium, and FP8 performance above Google's seventh generation TPU".

Deployment plans

Microsoft said Maia 200 already runs in production in its US Central region, near Des Moines, Iowa. It said US West 3 near Phoenix, Arizona, will follow.

The company linked Maia 200 to several internal and customer-facing AI efforts. It said the first systems run new models from the Microsoft Superintelligence team. It also said Maia 200 supports Microsoft Foundry projects and Microsoft 365 Copilot.

Microsoft also said Maia 200 will serve multiple models, including "the latest GPT-5.2 models from OpenAI". The company did not provide further details on those models in the announcement.

Economics focus

Microsoft framed Maia 200 as a response to the cost of AI token generation. It said the new accelerator improves "performance per dollar" compared with hardware already in its fleet.

"Today, we're proud to introduce Maia 200, a breakthrough inference accelerator engineered to dramatically improve the economics of AI token generation," said Scott Guthrie, Executive Vice President, Cloud + AI, Microsoft.

Microsoft said Maia 200 delivers "30% better performance per dollar than the latest generation hardware in our fleet today". It did not name that hardware.
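The phrase "30% better performance per dollar" is easy to misread as a 30% cost cut. The short calculation below assumes that performance means token throughput and that the figure applies uniformly to the workload being priced; it shows the implied cost per token relative to the unnamed baseline hardware.

```python
# What "30% better performance per dollar" implies for cost per token.
# Assumptions: performance is measured as token throughput, and the 30% figure
# applies uniformly to the workload being priced. Baseline hardware is unnamed.


def relative_cost_per_token(perf_per_dollar_gain: float) -> float:
    """Cost per token relative to baseline, given a fractional perf/$ gain."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


def tokens_per_budget(baseline_tokens: float, perf_per_dollar_gain: float) -> float:
    """Tokens the same spend buys, given the tokens it bought on the baseline."""
    return baseline_tokens * (1.0 + perf_per_dollar_gain)


if __name__ == "__main__":
    ratio = relative_cost_per_token(0.30)
    print(f"cost per token: {ratio:.3f}x baseline ({(1 - ratio) * 100:.1f}% lower)")
    print(tokens_per_budget(1_000_000, 0.30), "tokens for the same spend")
```

Under those assumptions, a 30% gain in performance per dollar corresponds to roughly 23% lower cost per token, or 30% more tokens for the same spend.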

System networking

Microsoft also described changes at the system level. It said Maia 200 uses a two-tier scale-up network design built on standard Ethernet, rather than proprietary interconnects.

Microsoft said the design includes a custom transport layer and an integrated network interface card. The company said each accelerator exposes 2.8TB/s of bidirectional, dedicated scale-up bandwidth. It also said the system provides collective operations across clusters of up to 6,144 accelerators.
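To illustrate how per-accelerator scale-up bandwidth feeds into collective operations, the sketch below applies the textbook bandwidth term of a ring all-reduce. It assumes, without confirmation from Microsoft, that the 2.8TB/s bidirectional figure splits evenly into 1.4TB/s in each direction, that a simple ring algorithm is used, and that latency and the two-tier topology can be ignored. The 64MB message size is hypothetical, and Microsoft has not said which collective algorithms its transport uses.

```python
# Bandwidth-only estimate of a ring all-reduce across Maia 200 accelerators.
# Assumptions (not from Microsoft): the 2.8 TB/s bidirectional figure splits
# evenly into 1.4 TB/s per direction; the collective uses a textbook ring
# algorithm; latency, congestion and the two-tier topology are ignored.

BIDIRECTIONAL_BW = 2.8e12          # bytes/s, stated per accelerator
PER_DIRECTION_BW = BIDIRECTIONAL_BW / 2
MAX_CLUSTER = 6144                 # stated upper bound for collectives


def ring_allreduce_seconds(message_bytes: float, n: int,
                           bw: float = PER_DIRECTION_BW) -> float:
    """In a ring all-reduce each rank sends 2*(n-1)/n of the message."""
    if not 2 <= n <= MAX_CLUSTER:
        raise ValueError(f"n must be between 2 and {MAX_CLUSTER}")
    traffic = 2 * (n - 1) / n * message_bytes
    return traffic / bw


if __name__ == "__main__":
    msg = 64e6  # hypothetical 64 MB tensor
    for n in (4, 64, MAX_CLUSTER):
        print(n, f"{ring_allreduce_seconds(msg, n) * 1e6:.1f} us")
```

The bandwidth term approaches 2 × message size ÷ per-direction bandwidth as the ring grows, so it barely changes between 64 and 6,144 accelerators. At large scale, the latency term this sketch omits, and how traffic is split across the two network tiers, tend to dominate.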

The company said each tray connects four Maia accelerators using direct, non-switched links. It also said it uses the same communication protocols for intra-rack and inter-rack networking through what it called the Maia AI transport protocol.
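The tray arrangement can be modelled as a small graph. The sketch below goes beyond what Microsoft stated by assuming that the four accelerators in a tray form a full mesh, that accelerators are numbered in consecutive groups of four per tray, and that traffic between trays crosses a switched Ethernet tier; the helper names are hypothetical. It counts the links a four-way full mesh needs and classifies whether a transfer stays on a direct tray link or leaves the tray.

```python
# Sketch of the tray-level topology: four accelerators per tray joined by
# direct, non-switched links. Assumptions (not from Microsoft): the four
# accelerators form a full mesh, consecutive groups of four share a tray,
# and traffic between trays goes over a switched Ethernet tier.

from itertools import combinations

ACCELERATORS_PER_TRAY = 4


def tray_of(accel_id: int) -> int:
    """Hypothetical mapping from a global accelerator id to its tray."""
    return accel_id // ACCELERATORS_PER_TRAY


def intra_tray_links() -> list[tuple[int, int]]:
    """Point-to-point links in an assumed full mesh of one tray (local ids 0-3)."""
    return list(combinations(range(ACCELERATORS_PER_TRAY), 2))


def path_kind(src: int, dst: int) -> str:
    """Classify a transfer as local, on a direct tray link, or off-tray."""
    if src == dst:
        return "local"
    return "direct link" if tray_of(src) == tray_of(dst) else "switched Ethernet"


if __name__ == "__main__":
    print(len(intra_tray_links()))   # 6 links for a 4-way full mesh
    print(path_kind(0, 3), "|", path_kind(3, 4))
    print(6144 // ACCELERATORS_PER_TRAY, "trays in a 6,144-accelerator cluster")
```

If every accelerator in a 6,144-accelerator cluster sat in a four-accelerator tray, the cluster would span 1,536 trays; Microsoft did not say how its largest clusters are physically composed.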

Software tools

Microsoft said Maia 200 integrates with Azure. It also said it is previewing the Maia SDK, which it described as a set of tools for building and optimising models for Maia 200.

Microsoft said the software includes PyTorch integration, a Triton compiler, an optimised kernel library, and access to a low-level programming language for Maia. The company said the SDK aims to allow model porting across different accelerators.

Internal development

Microsoft also outlined its approach to developing the accelerator and the surrounding infrastructure. It said it used a pre-silicon environment to model computation and communication patterns of large language models.

It also highlighted datacentre integration work. Microsoft said it validated complex system elements early, including the backend network and what it called its "second-generation, closed loop, liquid cooling Heat Exchanger Unit". It also said Maia 200 integrates with the Azure control plane for security, telemetry, diagnostics and management.

"This makes Maia 200 the most performant, first-party silicon from any hyperscaler, with three times the FP4 performance of the third generation Amazon Trainium, and FP8 performance above Google's seventh generation TPU," said Guthrie.

Microsoft said future regions will follow the initial deployments in US Central and US West 3.