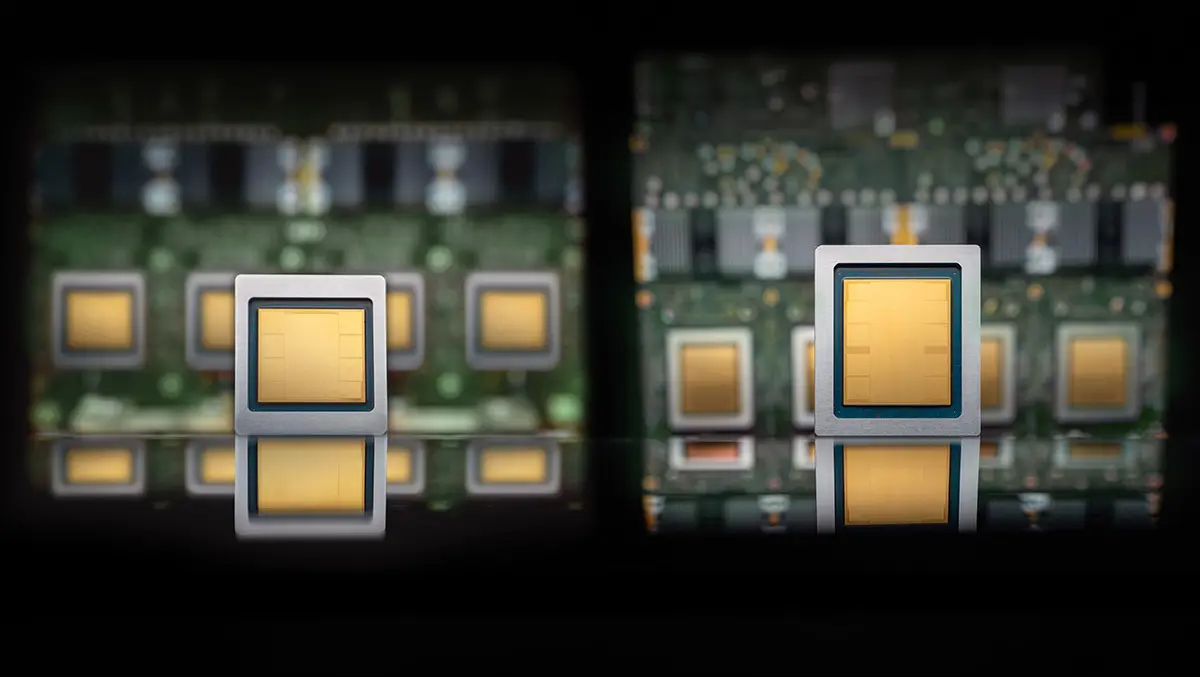

Google has introduced two eighth-generation Tensor Processing Units, TPU 8t and TPU 8i, aimed at AI training and inference workloads.

The launch marks a shift to separate designs for the two main types of AI computing task, as demand grows for systems that can train large models and run them in production at scale. Both chips will be available later this year as part of Google's AI Hypercomputer infrastructure.

TPU 8t is designed for model training, while TPU 8i is tuned for inference, particularly for AI agents that carry out multi-step tasks and interact with other models. Both processors can handle a range of workloads, but Google says specialisation improves efficiency.

For training, TPU 8t delivers nearly three times the compute performance per pod of the previous generation, Ironwood. A single TPU 8t superpod scales to 9,600 chips and includes two petabytes of shared high-bandwidth memory, with double the inter-chip bandwidth of its predecessor.

A full superpod reaches 121 exaflops of compute and is intended to shorten the time needed to develop frontier models. Google also highlighted faster storage access, direct data transfer into the TPU, and software features designed to improve scaling across very large clusters.
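For a sense of scale, the superpod totals can be divided down to rough per-chip figures. The sketch below is only back-of-envelope arithmetic on the rounded numbers quoted above. It assumes decimal units and an even split across chips, neither of which Google has confirmed here, and the article does not say which numeric precision the exaflops figure refers to.

```python
# Back-of-envelope per-chip estimates from the quoted superpod totals.
# Assumptions (not from Google): decimal units, resources split evenly per chip.
SUPERPOD_CHIPS = 9_600
SUPERPOD_FLOPS = 121e18      # 121 exaflops; numeric precision not stated
SUPERPOD_HBM_BYTES = 2e15    # "two petabytes", a rounded figure


def per_chip(total: float, chips: int = SUPERPOD_CHIPS) -> float:
    """Divide a superpod-wide total evenly across its chips."""
    return total / chips


flops_per_chip = per_chip(SUPERPOD_FLOPS)
hbm_per_chip = per_chip(SUPERPOD_HBM_BYTES)

print(f"{flops_per_chip / 1e15:.1f} petaflops per chip")
print(f"{hbm_per_chip / 1e9:.0f} GB of HBM per chip")
```

Under those assumptions the totals work out to roughly 12.6 petaflops and about 208GB of high-bandwidth memory per chip. Because the inputs are rounded, these are estimates rather than specifications.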

TPU 8t targets more than 97% goodput, a measure of productive compute time. To support that, Google has built in telemetry across large chip deployments, automatic rerouting around faulty links, and optical circuit switching that can reconfigure hardware when failures occur.
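To illustrate what a goodput target means in practice, the sketch below converts a goodput fraction into lost hours. It assumes goodput is simply productive time divided by wall-clock time. The 30-day run length and the 90% comparison point are hypothetical values chosen for illustration, not figures from Google.

```python
def lost_hours(goodput: float, wall_clock_hours: float) -> float:
    """Hours not spent on productive compute, with goodput given as a fraction."""
    if not 0.0 <= goodput <= 1.0:
        raise ValueError("goodput must be between 0 and 1")
    return (1.0 - goodput) * wall_clock_hours


RUN_HOURS = 30 * 24  # hypothetical 30-day training run

# 0.90 is a hypothetical comparison point; 0.97 is the stated target.
for goodput in (0.90, 0.97):
    print(f"goodput {goodput:.0%}: {lost_hours(goodput, RUN_HOURS):.1f} hours lost")
```

On these assumptions, a 30-day run at 97% goodput loses a little under a day of cluster time, compared with three days at 90%.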

Inference focus

By contrast, TPU 8i is aimed at latency-sensitive inference. Its design addresses memory and communication bottlenecks that can slow the response times of large models, especially when many AI agents operate together.

The chip includes 288GB of high-bandwidth memory and 384MB of on-chip SRAM, three times as much as the previous generation. It also doubles the number of physical CPU hosts per server and uses Google's Axion Arm-based CPUs.

For Mixture of Experts models, which split work across specialised model components, TPU 8i doubles interconnect bandwidth to 19.2Tb/s. A new Boardfly architecture reduces the maximum network diameter by more than 50%, while an on-chip Collectives Acceleration Engine cuts latency in global operations by up to a factor of five.

Google says these changes give TPU 8i an 80% improvement in performance per dollar over the previous generation, allowing businesses to serve nearly twice the customer volume at the same cost.
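The link between the 80% figure and "nearly twice the customer volume" is simple arithmetic, sketched below. It assumes that serving volume scales linearly with performance per dollar, which is a simplification. The function names are illustrative only.

```python
def volume_at_same_cost(perf_per_dollar_gain: float) -> float:
    """Relative serving volume at an unchanged budget (1.0 = previous generation)."""
    return 1.0 + perf_per_dollar_gain


def cost_at_same_volume(perf_per_dollar_gain: float) -> float:
    """Relative cost to serve an unchanged volume (1.0 = previous generation)."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


GAIN = 0.80  # the 80% performance-per-dollar improvement quoted above

print(f"volume at same cost: {volume_at_same_cost(GAIN):.1f}x")
print(f"cost at same volume: {cost_at_same_volume(GAIN):.0%} of before")
```

Under that linear assumption, the same budget serves 1.8 times the volume, which matches the "nearly twice" claim. Equivalently, the same volume would cost about 56% of what it did on the previous generation.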

Broader strategy

The two-chip approach reflects a broader bet that AI infrastructure is moving towards more specialised systems. Google says it anticipated higher demand for inference several years ago as large AI models moved from research into production use, and concluded that separate chips for training and serving would better match those workloads.

The strategy also reflects an effort to tie silicon design more closely to model requirements. TPU 8t and TPU 8i were developed with Google DeepMind, with network, memory, and software choices shaped by the needs of reasoning models, trillion-parameter training, and production-scale deployment.

Both platforms support JAX, MaxText, PyTorch, SGLang, and vLLM. Customers will also be able to access the hardware without virtualisation overhead through bare-metal options. Google is extending its in-house design work beyond accelerators to the full server stack by using its own Axion CPU hosts across both systems.

Power constraints

The announcement comes as energy use has become a central issue in AI infrastructure. Google says TPU 8t and TPU 8i deliver up to twice the performance per watt of Ironwood, helped by power management that adjusts draw according to demand.

Google's data centres now deliver six times as much computing power per unit of electricity as they did five years ago, according to the company. Both new TPU systems use Google's fourth-generation liquid cooling technology, which it says allows higher performance densities than air cooling.

The emphasis on efficiency highlights one of the main pressures facing cloud providers and AI developers. As model size and inference demand grow, access to electricity and cooling is becoming as important as access to chips.

Amin Vahdat, Senior Vice President and Chief Technologist, AI and Infrastructure at Google, said: "Several years ago, we anticipated rising demand for inference from customers as frontier AI models are deployed in production and at scale. And with the rise of AI agents, we determined the community would benefit from chips individually specialized to the needs of training and serving."